VERC: Particle Systems: The Theory

Last edited 2022-09-29 07:55:17 UTC

Particle systems are everywhere these days. Heck, they were pretty much everywhere 4 years ago; Half-Life has an (albeit very limited) particle system. With games like Unreal Tournament 2003 blowing us away with fancy new graphics, people wonder how all the flying dots and spewing lines actually work, and rightfully so. I feel it's important to understand the game you're playing so you can get a feel for how much work has gone into it, and understanding some of the principles behind it is an important step.

What is a particle system, anyway? Simply put, it's something that spews out particles at set time intervals and applies physical and graphical effects to them. Each particle is rendered with a sprite, animated or not, and has its own position, velocity, colour and transparency. Particles can be made to interact with their environment and the objects in it (bouncing off walls, shattering glass, giving health to a player), and can have other effects added, such as trails. In short, they're damn powerful tools.
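As a rough illustration of the per-particle state described above, here is a minimal Python sketch. The `Particle` class and its field names (`sprite_frame`, `age` and so on) are hypothetical, not taken from Half-Life or any particular engine; a real implementation would more likely be a C or C++ struct.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[float, float, float]


@dataclass
class Particle:
    """Per-particle state described in the text (field names are hypothetical)."""
    position: Vec3          # world-space position, in map units
    velocity: Vec3          # units per second
    colour: RGB             # 0.0 to 1.0 per channel
    alpha: float = 1.0      # transparency: 1.0 is opaque, 0.0 is invisible
    sprite_frame: int = 0   # current frame if the sprite is animated
    age: float = 0.0        # seconds the particle has been alive


# Example: a spark spawned 64 units up, moving mostly upwards (a Z-up world is assumed).
spark = Particle(position=(0.0, 0.0, 64.0), velocity=(10.0, 0.0, 200.0), colour=(1.0, 0.8, 0.2))
```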

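Let's take a look at the standard per-frame pipeline for one particle system: a particle is spawned, and then every frame its colour is updated, its position and velocity are updated, its lifespan is updated, it is checked to see whether it should die, and, if it survives, it is rendered. Each particle goes through these processes before rendering each frame, and it's these processes that give particles the characteristics of whatever they're being used as. Let's analyse the steps in detail.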

Spawn particle

A particle system, as mentioned earlier, spawns particles at specific time intervals. Every frame, a particle system checks how long it has been alive and when the last particle was spawned to decide whether it needs to spawn another particle; if so, a particle is created. At creation, a particle is assigned a starting colour, position, velocity and transparency, which are then modified as the particle is updated every frame.

We now enter the per-frame pipeline: each of the following operations is performed every frame until the particle is removed.
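The spawning rule from "Spawn particle" can be sketched with a simple clock, assuming one particle is due every fixed interval. `Emitter`, `interval` and `last_spawn` are hypothetical names, and the spawn times stored in `spawned` stand in for real particle objects.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Emitter:
    """Hypothetical emitter that spawns one particle every `interval` seconds."""
    interval: float                 # seconds between spawns
    system_age: float = 0.0         # how long the system has been living
    last_spawn: float = 0.0         # system_age at which the last particle was spawned
    spawned: List[float] = field(default_factory=list)  # spawn times, standing in for particles

    def update(self, dt: float) -> int:
        """Advance the system clock by dt and spawn every particle that is due.

        Returns how many particles were spawned this frame. The while loop means
        a long frame (large dt) still produces all the particles that fell due.
        """
        self.system_age += dt
        count = 0
        while self.system_age - self.last_spawn >= self.interval:
            self.last_spawn += self.interval
            # A real system would create a particle here and assign its starting
            # colour, position, velocity and transparency.
            self.spawned.append(self.last_spawn)
            count += 1
        return count
```

Catching up with a `while` loop, rather than spawning at most one particle per frame, keeps the emission rate independent of the frame rate; whether a given engine does this is a design choice.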

Update colour

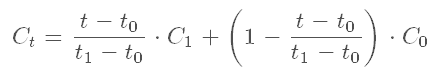

A particle doesn't have to have a fixed colour or transparency. To add visual flair, colour and transparency can be modulated over time, blending from one colour and transparency to another over a set period. More than one modulation can be added, too, letting a particle blend its way through a spectrum of colours in a visually pleasing way. The following formula is used to blend between two colours ($C_0$ and $C_1$) over the period from $t_0$ to $t_1$, at time $t$:

$$C(t) = C_0 + (C_1 - C_0)\,\frac{t - t_0}{t_1 - t_0}, \qquad t_0 \le t \le t_1$$

A similar formula is used to blend transparency.

Update position/velocity

After updating the particle's colour and transparency, attention can be turned to physics. A particle has a velocity (normally in units per second) which is applied to its position every frame: P = P + V * dt, where dt is the time elapsed between the last frame and the current one. However, the velocity does not have to stay constant: gravity and air resistance can be applied to it so that the particle's motion responds to its environment, and the particle can also be made to accelerate by adding an acceleration term to its velocity. All these factors combine to give a particle potentially very complex motion, and that's before collision detection is taken into account.

Particles can be made to collide with their environment and respond to that collision in various ways, the most popular being (a) simply killing the particle, or (b) bouncing. I won't give the formula for calculating the 3D reflection vector off a plane, but applying it to a particle can create very interesting effects. (If bouncing is implemented, a "slowdown" factor is normally applied on each bounce to simulate the kinetic energy a real object loses as heat and sound, so the particle bounces less and less each time.)
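A minimal sketch of the position/velocity step and a plane bounce, assuming vectors are plain 3-tuples and that air resistance is a simple linear drag term (an assumption; the text does not specify a drag model). The bounce uses the standard reflection formula R = V - 2(V·N)N for a unit plane normal N, followed by the slowdown factor. `Body`, `integrate` and `bounce` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def scale(v: Vec3, s: float) -> Vec3:
    return (v[0] * s, v[1] * s, v[2] * s)


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


@dataclass
class Body:
    position: Vec3  # units
    velocity: Vec3  # units per second


def integrate(p: Body, dt: float, gravity: Vec3, accel: Vec3, drag: float) -> None:
    """Semi-implicit Euler step: update V from gravity, acceleration and drag, then P = P + V * dt."""
    # Linear drag (assumed model): the velocity loses drag * V per second.
    dv = add(add(gravity, accel), scale(p.velocity, -drag))
    p.velocity = add(p.velocity, scale(dv, dt))
    p.position = add(p.position, scale(p.velocity, dt))


def bounce(p: Body, plane_normal: Vec3, plane_dist: float, slowdown: float) -> bool:
    """Reflect the particle off the plane dot(N, X) = plane_dist if it has gone behind it.

    plane_normal must be unit length. slowdown (0..1) scales the reflected velocity
    to mimic the energy lost on each bounce. Returns True if a bounce happened.
    """
    depth = dot(p.position, plane_normal) - plane_dist
    if depth >= 0.0 or dot(p.velocity, plane_normal) >= 0.0:
        return False  # still in front of the plane, or already moving away from it
    # Reflection vector: R = V - 2 (V . N) N
    r = add(p.velocity, scale(plane_normal, -2.0 * dot(p.velocity, plane_normal)))
    p.velocity = scale(r, slowdown)
    # Push the particle back onto the plane so it does not stay embedded in it.
    p.position = add(p.position, scale(plane_normal, -depth))
    return True
```

Because the velocity is updated before the position, this is a semi-implicit (symplectic) Euler step, which tends to behave better than plain explicit Euler at the same cost. Calling `bounce` right after `integrate` with, for example, a floor normal of (0, 0, 1) and a slowdown of 0.5 halves the particle's speed on each bounce.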

Update lifespan

It's very important that a particle keeps track of how long it's been alive; how else will it know when it should die? A particle already requires the time delta for its physics calculations, so no extra input is needed: the time delta is simply added to the particle's current lifespan, i.e. its age so far. It's a simple but vital step.

Should it die?

A particle system normally has a "particle lifespan" setting, which determines exactly how long a particle stays alive; this is what each particle compares its current lifespan against. If it has been alive longer than the specified maximum lifespan, the particle has done its job and is removed from the particle system's per-frame updates. If it doesn't need to die yet, it moves on to the next step.

Rendering

If particles weren't rendered, we wouldn't know whether they were doing things correctly and, more importantly, we wouldn't see the wonderful visual effects they can produce! A game can use a variety of rendering methods, but they normally involve passing a quad (two right-angled triangles sharing their hypotenuse) to the rendering API of choice (OpenGL or Direct3D). If the system were being coded into Half-Life, the TriAPI (Triangle API, a small interface from the client DLL to the renderer) could be used.

Once all of the above has been done, the rest of the frame can be calculated: other live particles are updated, the world is rendered, object physics are updated, and so on through everything else a game does in its pipeline.
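To illustrate the quad used in the rendering step, here is a hedged sketch of building a camera-facing quad (a "billboard") for one particle. It assumes the engine can supply the camera's unit right and up vectors; `billboard_quad` and its parameters are hypothetical, and actually submitting the vertices to OpenGL, Direct3D or the TriAPI is left out because those calls are API-specific.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def billboard_quad(centre: Vec3, right: Vec3, up: Vec3,
                   half_size: float) -> Tuple[List[Vec3], List[Tuple[int, int, int]]]:
    """Build a camera-facing quad centred on a particle.

    right and up are the camera's unit right and up vectors (assumed to be
    supplied by the engine). Returns four corner positions and two triangles
    that share the diagonal from corner 0 to corner 2.
    """
    def corner(sr: float, su: float) -> Vec3:
        # Offset the centre by +/- half_size along the camera's right and up axes.
        return tuple(c + (sr * r + su * u) * half_size for c, r, u in zip(centre, right, up))

    corners = [
        corner(-1.0, -1.0),  # 0: bottom-left
        corner(+1.0, -1.0),  # 1: bottom-right
        corner(+1.0, +1.0),  # 2: top-right
        corner(-1.0, +1.0),  # 3: top-left
    ]
    triangles = [(0, 1, 2), (0, 2, 3)]  # both triangles contain the shared edge 0-2
    return corners, triangles
```

Edge 0-2 is the hypotenuse of both triangles, and they are right-angled because a camera's right and up vectors are perpendicular. Texture coordinates such as (0, 0), (1, 0), (1, 1) and (0, 1) on corners 0 to 3 would typically map the particle's sprite onto the quad.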

The method presented here is by no means a rigid recipe that all particle systems follow, but they all have something fairly similar to it. Some are more complex, others less so, depending on what they will be used for, but underneath it all, these are the simple processes applied to particles.
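To tie the per-frame steps together, the colour, lifespan and death-check stages can be sketched as one update function. This assumes colour and transparency are blended linearly between keyframes, C = C0 + (C1 - C0)(t - t0)/(t1 - t0), applied to all four RGBA channels at once; `Keyframe`, `blend` and `update_particles` are hypothetical names, and physics and rendering are omitted for brevity.

```python
from dataclasses import dataclass
from typing import List, Tuple

RGBA = Tuple[float, float, float, float]


@dataclass
class Keyframe:
    time: float   # seconds since the particle was spawned
    rgba: RGBA    # colour and transparency at that time


def blend(keys: List[Keyframe], t: float) -> RGBA:
    """Linearly blend between the two keyframes that bracket time t.

    Uses C = C0 + (C1 - C0) * (t - t0) / (t1 - t0). Before the first key or after
    the last key, the end value is held. keys must be sorted by time.
    """
    if t <= keys[0].time:
        return keys[0].rgba
    for k0, k1 in zip(keys, keys[1:]):
        if t <= k1.time:
            f = (t - k0.time) / (k1.time - k0.time)
            return tuple(c0 + (c1 - c0) * f for c0, c1 in zip(k0.rgba, k1.rgba))
    return keys[-1].rgba


@dataclass
class Particle:
    age: float = 0.0                       # current lifespan, in seconds
    rgba: RGBA = (1.0, 1.0, 1.0, 1.0)


def update_particles(particles: List[Particle], keys: List[Keyframe],
                     lifespan: float, dt: float) -> List[Particle]:
    """Run the colour, lifespan and death steps for one frame; return the survivors."""
    survivors = []
    for p in particles:
        p.rgba = blend(keys, p.age)   # update colour and transparency
        p.age += dt                   # update lifespan with this frame's time delta
        if p.age > lifespan:          # should it die?
            continue                  # removed from the per-frame updates
        survivors.append(p)           # a real system would now render it
    return survivors
```

With a ramp from opaque yellow at 0 s to fully transparent red at 2 s, a particle halfway through its life comes out orange and half transparent.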

Hopefully this has been useful, enabling you to understand the principles behind some of the most stunning visual effects in today's (and tomorrow's) games. If you have any technical questions, or there's something you don't understand, feel free to contact me.

- Article Credits

- Francis 'DeathWish' Woodhouse – Author

This article was originally published on Valve Editing Resource Collective (VERC).

The original URL of the article was http://collective.valve-erc.com/index.php?doc=1038061644-48607100.

The archived page is available here.

TWHL only publishes archived articles from defunct websites, or with permission.

For more information on TWHL's archiving efforts, please visit the TWHL Archiving Project page.
